New Pub: Towards Automatic Collaboration Analytics for Group Speech Data Using Multimodal Learning Analytics

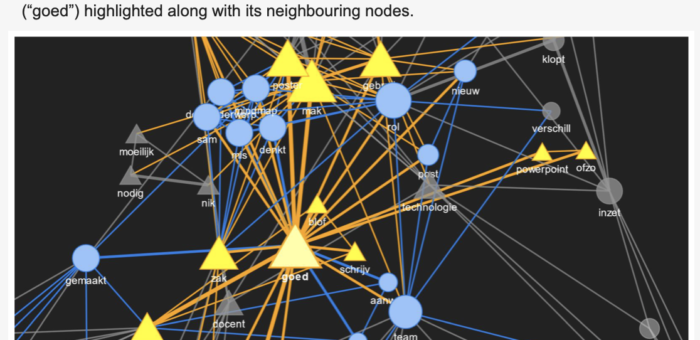

Collaboration is an important 21st-century skill. Co-located (or face-to-face) collaboration (CC) analytics has gained momentum with the advent of sensor technology. Most work in this area has used the audio modality to detect the quality of CC. CC quality can be detected from simple indicators of collaboration, such as total speaking time, or from complex indicators, such as synchrony in the rise and fall of the average pitch. Most past studies focused on “how group members talk” (i.e., spectral and temporal features of audio, like pitch) and not on “what they talk about”. The “what” of a conversation is more overt than the “how”. Very few studies have examined “what” group members talk about, and these studies were lab-based, showing a representative overview of specific words as topic clusters…